I’m often asked which invention I think is the biggest in human history. I do not have a single answer, but I have a short list:

1) Writing – once we learned how to codify knowledge, our progress accelerated tremendously.

2) Computing – once we learned how to make complex calculations fast, we started to achieve the impossible – going to the Moon, communicating over the Internet, just to name a few.

3) AI – once we learned how to use advanced calculations to simulate intelligence, humanity reached new heights.

This book takes us through that kind of journey. It does add a few more steps, like the invention of binary calculations, the Internet, Google, etc., but in essence, it does follow the same pattern.

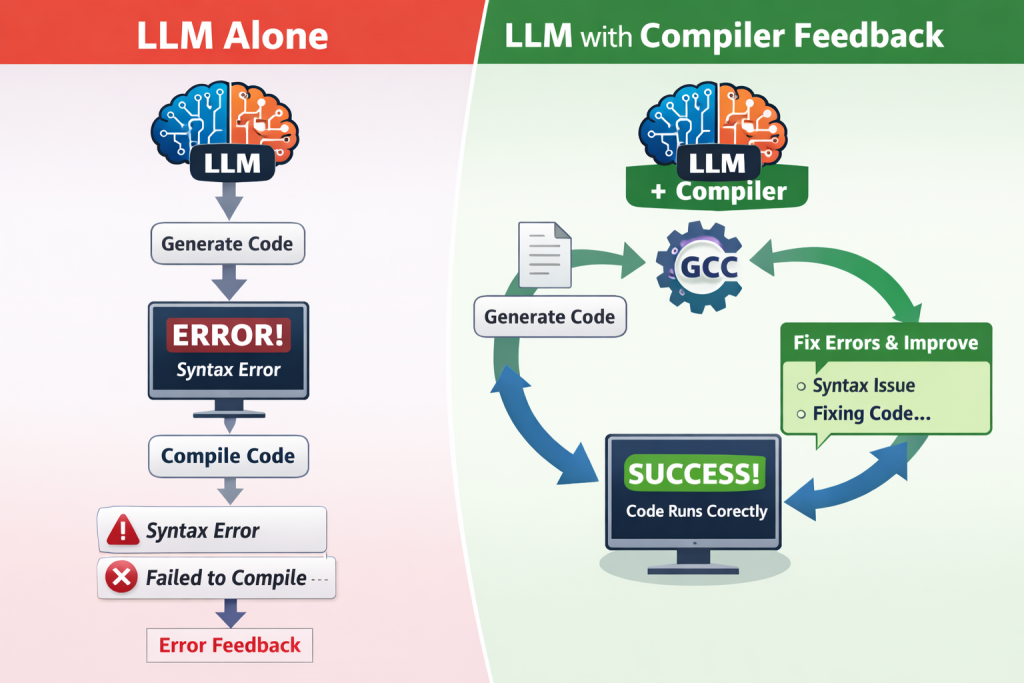

What the book does not cover, and what I often wonder about, is the invention of the compiler. Compilers, especially for higher-level programming languages like C, provided the abstraction needed to decouple the nitty-gritty details of computer architectures from the problems we want to solve.

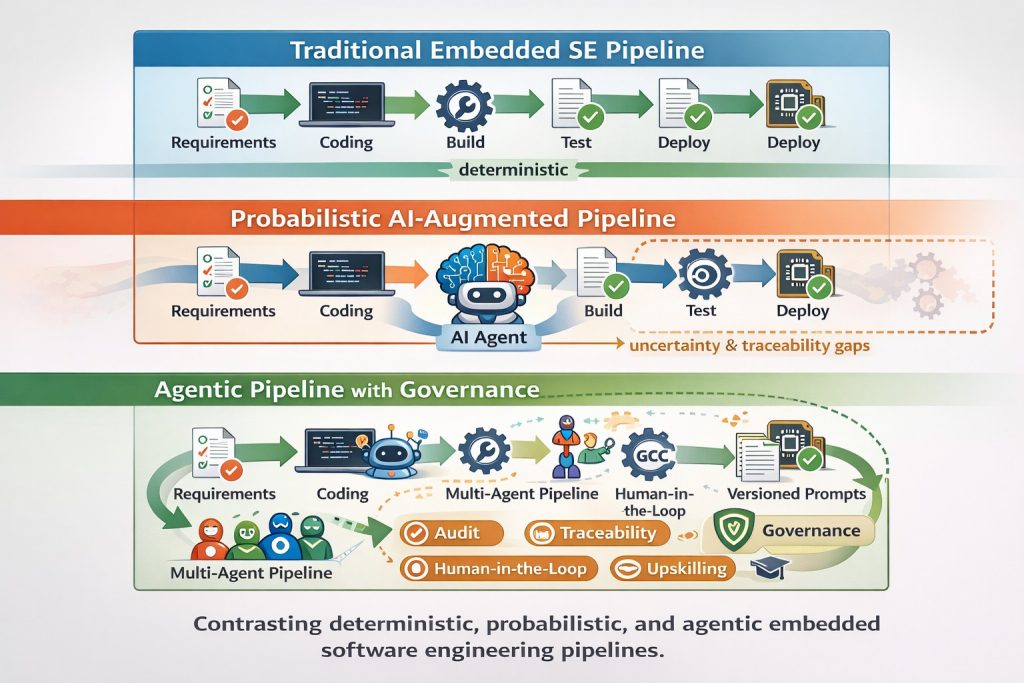

We see a similar development today with LLMs and Agentic AI. They decouple the details of programs from the intents and requirements of the user. We do not need to know anything about programming to create software that does things for us. Product owners can create prototypes, requirements engineers can test their hypotheses, testers can ensure that they do not miss important corner cases – there are many more examples, and that’s just software engineering.

This does not mean that software engineering is solved, as Nvidia’s CEO put it; it means that it has changed. It’s probably the most fun time to be a software engineer, as we can start solving really difficult problems without losing time on implementation details. We also need to know how to design systems based on AI – how to engineer them (BTW: if you are interested in this, here is my latest book that will help you: Link).

I recommend Tom Wheeler’s book to anyone interested in the story of how we invented AI in the first place.