Image by Vinson Tan ( 楊 祖 武 ) from Pixabay

https://ieeexplore.ieee.org/document/10978937

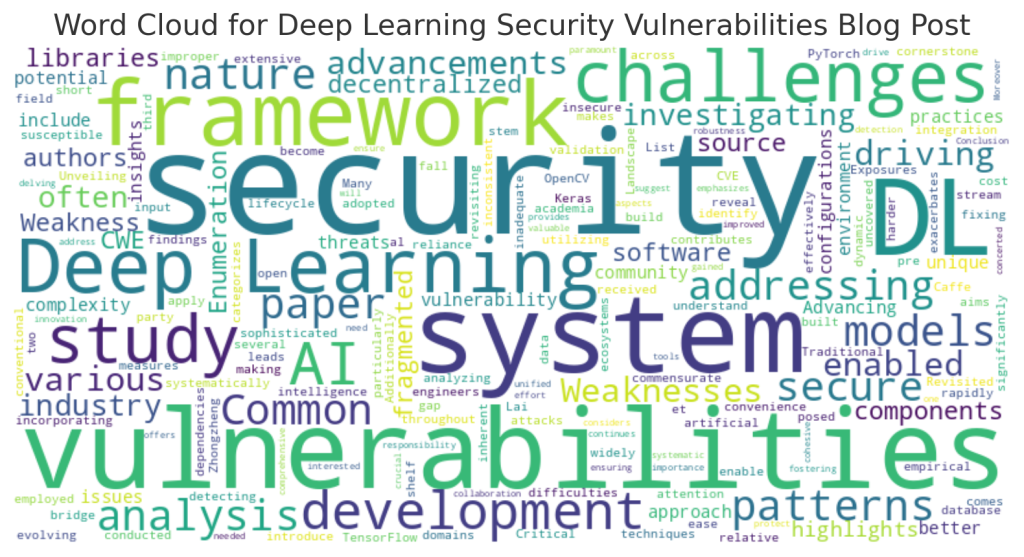

We’ve all seen Large Language Models (LLMs) write impressive snippets of code or debug a tricky function. But can an AI actually understand the soul of a system? Can it explain the “why” behind a complex architectural decision?

The paper, “Do Large Language Models Contain Software Architectural Knowledge? An Exploratory Case Study with GPT,” puts this to the test. The researchers ran a study with 14 software engineers to see whether GPT could navigate the Architectural Knowledge (AK) of a massive, real-world system: the Hadoop Distributed File System (HDFS).

The Experiment: AI vs. The Ground Truth

Engineers grilled GPT with questions ranging from basic component identification to deep design rationales. GPT’s answers were then compared against a verified “ground truth” of HDFS documentation.

The Results

The study revealed a fascinating dichotomy in GPT’s performance.

Recall was OK: GPT is surprisingly good at “remembering” things. It showed moderate recall, meaning it could often identify the correct architectural components and general concepts buried in its training data.

Precision was really bad (guessing would do much better): GPT struggled with accuracy, frequently providing answers that sounded authoritative but were technically incorrect or “hallucinated.”
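To make the recall versus precision split concrete, here is a minimal Python sketch using the standard set-based definitions. The paper's actual scoring procedure is not described in this post, so the function name `precision_recall` and the example answer are illustrative assumptions, not data from the study. NameNode, DataNode, Secondary NameNode, and JournalNode are real HDFS components; the other three names are invented to stand in for hallucinations.

```python
def precision_recall(answer: set[str], ground_truth: set[str]) -> tuple[float, float]:
    """Standard set-based precision and recall for one answer."""
    true_positives = len(answer & ground_truth)
    # Precision: what fraction of the answer is actually correct?
    precision = true_positives / len(answer) if answer else 0.0
    # Recall: what fraction of the ground truth did the answer find?
    recall = true_positives / len(ground_truth) if ground_truth else 0.0
    return precision, recall


# Illustrative data only (not taken from the study).
ground_truth = {"NameNode", "DataNode", "Secondary NameNode", "JournalNode"}
answer = {
    "NameNode", "DataNode", "JournalNode",       # real components
    "BlockBroker", "MetaCache", "ReplicaRouter",  # invented "hallucinations"
}

precision, recall = precision_recall(answer, ground_truth)
print(f"precision={precision:.2f} recall={recall:.2f}")
# prints "precision=0.50 recall=0.75"
```

In this made-up case the answer recovers three of the four real components, so recall looks decent, but half of what it lists does not exist, which drags precision down. That is the pattern described above: plenty of correct material, mixed with confident noise.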

When asked about design rationales (why a specific solution was chosen) or quality attribute solutions, GPT’s performance dipped significantly. It can tell you what is there, but it struggles to explain the engineering trade-offs.

The Takeaway for Architects

The engineers in the study rated GPT’s trustworthiness as only moderate. The verdict is clear: GPT is a fantastic tool for initial discovery and brainstorming, but it cannot be used as a source of truth for critical system design.

The bottom line: treat LLMs as junior architects with a photographic memory but a shaky grasp of logic. They are great for a first draft, but expert human validation remains the most important step in the process.