Concerns identified in code review: A fine-grained, faceted classification – ScienceDirect

Code reviews are time-consuming. And effort-intensive. And boring. And needed. Depending on whom we ask, we get one of the above answers (well, 80% of the time). The reality is that code reviews are not the most productive activity. Reading code and looking for defects is fine when we do it once, but when we have to do it continuously, as part of continuous integration, the story changes. It becomes like studying for an exam or doing homework: we do everything else to postpone it. Then either someone waits longer or the code quality suffers.

There has been a lot of work done to make this activity more fun: gamification, automated support, using machine learning to filter out the code that we can check automatically, just to name a few. As far as I know, there has not been much work on understanding what kinds of problems code reviews really find.

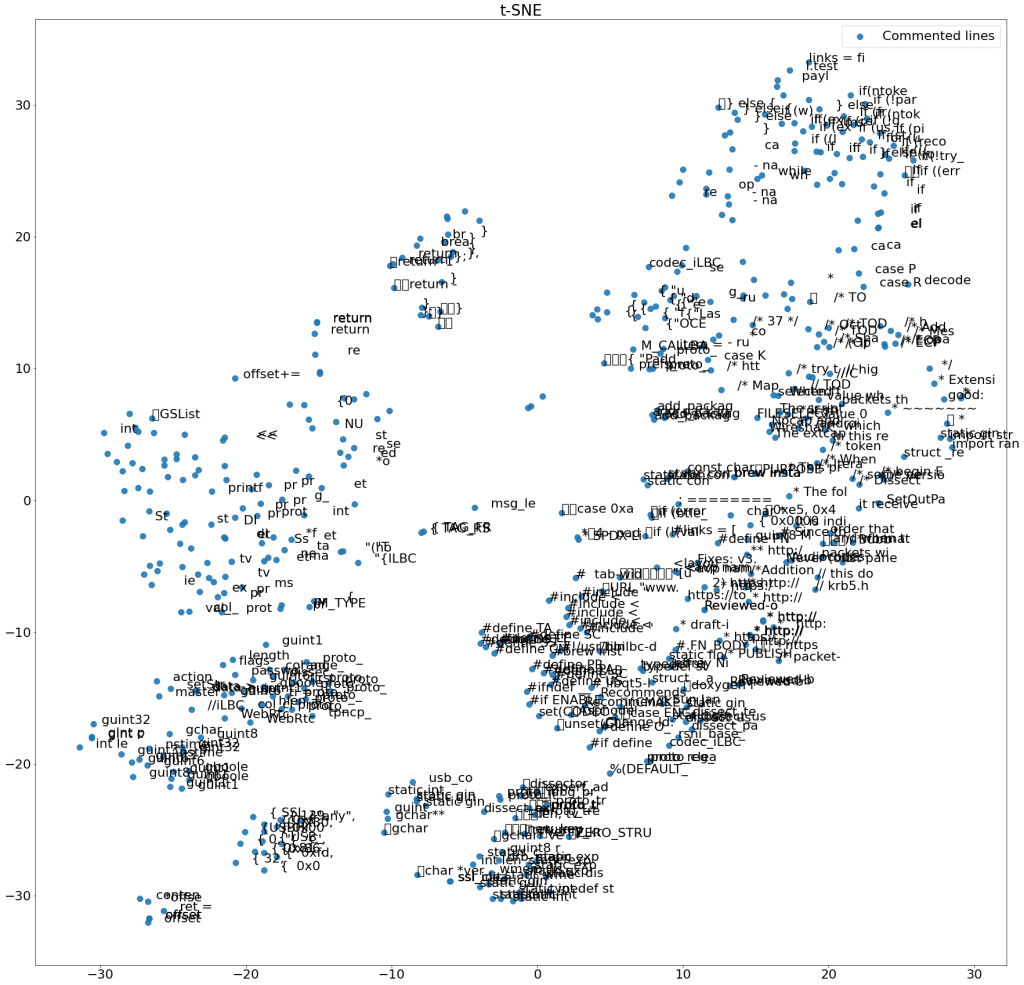

In this article, the authors address that very question. Admittedly, they only analyzed 7 OSS projects, but their results are still interesting: “We identified 116 defect types that we grouped into 15 groups to create a defect classification. Additionally, 38% of these defects could be automatically detected accurately.”

So, that basically means that 38% of the defects could be identified by using testing or static analysis (or some other fancy automation technique). The following figure summarizes their results (the link points to the figure on ScienceDirect): https://ars.els-cdn.com/content/image/1-s2.0-S0950584922001653-gr5_lrg.jpg
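To make "automatically detected" a bit more concrete, here is a minimal sketch of what a static check for two kinds of review concerns could look like. It is my own illustration, not the tooling or the method used in the paper: the function name `find_review_concerns`, the snake_case rule and the sample code are all assumptions, and the check only uses Python's standard `ast` module.

```python
import ast
import re

# Assumption for this sketch: function names should be snake_case.
SNAKE_CASE = re.compile(r"^_*[a-z][a-z0-9_]*$")


def find_review_concerns(source):
    """Return a list of simple, automatically detectable concerns.

    Hypothetical helper: it flags function names that are not snake_case
    and functions that have no docstring.
    """
    concerns = []
    tree = ast.parse(source)
    for node in ast.walk(tree):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            if not SNAKE_CASE.match(node.name):
                concerns.append(
                    f"line {node.lineno}: identifier naming: "
                    f"'{node.name}' is not snake_case"
                )
            if ast.get_docstring(node) is None:
                concerns.append(
                    f"line {node.lineno}: comments: "
                    f"'{node.name}' has no docstring"
                )
    return concerns


# Made-up sample code to run the check on.
SAMPLE = '''
def ComputeTotal(items):
    return sum(items)

def average(items):
    """Return the arithmetic mean of items."""
    return sum(items) / len(items)
'''

if __name__ == "__main__":
    for concern in find_review_concerns(SAMPLE):
        print(concern)
```

A check like this can run in the CI pipeline before a human ever looks at the change, so the reviewer's time goes to the things a script cannot judge.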

So, what are code reviews good for? Here is their list:

- threads,

- header comments,

- errors, warnings and logging,

- test cases,

- annotations,

- performance,

- identifier naming,

- modifiers,

- comments,

- javadoc,

- design,

- implementation, and

- logic and functionality

The list is sorted from the least frequent to the most frequent, so logic and functionality is the category where code reviews are most useful. I also need to say that the frequencies are not super-high: threads account for only 1 detected concern, while logic and functionality has 57. So, you know, it could be more, given how much time is spent on code reviews. I guess that is what quality costs nowadays, even though there is no real data on this.